Pattern Recognition and Machine Learning

Don't worry too much about “which one is best”; take one and go all the way through! I think breadth is better than depth for a lot of things.

- Advanced Analytics with Spark: Patterns for Learning from Data at Scale has some great application ideas.

- Pattern Recognition: a very good overview paper on pattern recognition by Robi Polikar at Rowan University

- Machine Learning links from NoiseBridge

- Udacity Data Science classes look good too.

- Varsha / Feras at Edwards recommend The Elements of Statistical Learning (Hastie) (FREE BOOK) and solutions, MLAPP, and Applied Predictive Modeling

- Dannenberg paper on bootstrap learning for note onset detection using neural networks (bootstrap2006.pdf).

- University of Washington has a cool information retrieval project that pulls from Tom Mitchell's CMU library and Freebase to answer some questions. Github

- Talked with Norm at Intel about machine learning. The HP project organizes compute around memory, instead of memory around/next to compute.

- Also, the Intel NVRAM is going to really reduce RAM refresh power on small devices. Will have to lower power usage some to get there, though.

- Intel internal deep learning model trainer.

- The guy from PGE I met at the robotics tournament is Alex Banicki.

Deep Learning Playground

http://playground.tensorflow.org/. So simple and interactive. Also, need multiple layers for spiral, but it does it!

Fast.ai course

Lesson 1

The higher layers seem to learn specific examples of people/cats/dogs/etc. Does the network transfer well then?

- Is it just summing up the results?

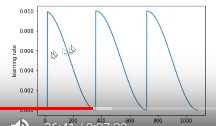

Setting learning rate. You want to find a good one as you'll be re-training your models as you grab more data.

Lesson 2

Stochastic Gradient Descent with restarts is better than ensembles because it allows one to stay in “wide” / generalizable minima rather than restarting to find another minimum that might not be wide.

- I thought he said that the descent rate changed over time automatically because it was a learning rate times the gradient, which scaled smaller as we get closer to bottom. So still don't see why cosine annealing is needed. But apparently it's better.
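On the question above: a likely reason is that minibatch gradients are noisy, so they never shrink all the way to zero and a fixed learning rate keeps bouncing around the bottom. The sketch below shows only the schedule itself, not a training loop. The function name, `cycle_len`, `lr_max`, and `lr_min` are illustrative choices, not fast.ai's API.

```python
import math

def cosine_annealing_lr(step, cycle_len, lr_max=0.1, lr_min=0.0):
    """Learning rate at `step` for cosine annealing with warm restarts.

    Each cycle starts at lr_max, decays along half a cosine toward lr_min,
    then jumps back to lr_max (the "restart").
    """
    pos = (step % cycle_len) / cycle_len  # position within the cycle, in [0, 1)
    return lr_min + 0.5 * (lr_max - lr_min) * (1 + math.cos(math.pi * pos))

# Two cycles of four steps each: the rate falls, then restarts at step 4.
schedule = [cosine_annealing_lr(s, cycle_len=4) for s in range(8)]
```

The jump back to `lr_max` is what can kick the weights out of a narrow minimum, while a wide minimum is more likely to survive the kick.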

Also, the initial training, what was it training? It wasn't modifying the precomputed weights, so what was it doing?

- precompute=True/False, Freeze=True/False

- Discussion on forum, http://forums.fast.ai/t/precompute-vs-freezing/7511/8. Would like to understand, but hungry right now.

Lesson ??

Still want to make a continuous output thing. Train with simple thing like sine wave amplitude or frequency and maybe combine them. Then move to something complicated like the sales prediction, data center load prediction, or better the stuff that Levi is doing at BPA.
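A possible starting point for the sine-wave idea is a generator of labelled examples plus a non-learned baseline to beat. This is only a sketch: the window length, the amplitude range, and the restriction to whole cycles per window are assumptions made here so that the baseline is exact.

```python
import math
import random

def make_sine_example(n_samples=64, rng=random):
    """Return (samples, amplitude, cycles) for one random sine wave."""
    amplitude = rng.uniform(0.5, 2.0)
    cycles = rng.randint(1, n_samples // 4)  # whole cycles per window
    phase = rng.uniform(0.0, 2.0 * math.pi)
    samples = [amplitude * math.sin(2.0 * math.pi * cycles * n / n_samples + phase)
               for n in range(n_samples)]
    return samples, amplitude, cycles

def rms_amplitude(samples):
    """Non-learned baseline: a sine's amplitude is sqrt(2) times its RMS."""
    mean_square = sum(x * x for x in samples) / len(samples)
    return math.sqrt(2.0 * mean_square)

rng = random.Random(0)  # seeded so the dataset is reproducible
dataset = [make_sine_example(rng=rng) for _ in range(100)]
worst_error = max(abs(rms_amplitude(x) - a) for x, a, _ in dataset)
```

A regression model trained on `samples` to predict `amplitude` (or `cycles`) can then be compared against this baseline before moving to messier data.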

Winning kaggle submissions: https://www.kaggle.com/sudalairajkumar/winning-solutions-of-kaggle-competitions

Classifying ML tasks

From Turi Create documentation: https://github.com/apple/turicreate

| ML Task | Description |
|---|---|
| Recommender | Personalize choices for users |
| Image Classification | Label images |
| Object Detection | Recognize objects within images |
| Style Transfer | Stylize images |
| Activity Classification | Detect an activity using sensors |
| Image Similarity | Find similar images |
| Classifiers | Predict a label |
| Regression | Predict numeric values |
| Clustering | Group similar datapoints together |
| Text Classifier | Analyze sentiment of messages |

Talk with Andres

- What drives you? Do you have a goal in mind?

- What sort of things are you doing now? Hardware design?

- While I enjoy doing machine learning problems, I'm having difficulty finding applications that I can do really well / solve and I know it's a lot of work.

- I find I am liking being employed more than working on cool (to me) projects. But…they are much easier too. Hopefully I can get them both, but is there really that much demand for machine learning people making … real stuff?

- Isn't there more demand for people getting stuff done than the movers on the top of the food chain?

His thesis was combining correlation filters (MACE) and SVMs.

He's in charge of AI strategy for the data center.

It's not going to slow down, even with an economic downturn. More and more 2nd tier companies are finding uses for things, in addition to the 1st tier guys.

It's working super well. Which would you prefer for your robot doctor? A robot that will get it correct 80% of the time but tell you why it failed or a 99% right robot that you don't know so well how to diagnose?

Recommends starting with Kaggle things and moving from there. That's your “rep” in the industry.

Facebook machine learning data center, (deep neural networks), published 6 months ago

- If you can get away with a simpler model, then do so. Lots faster/cheaper to train and evaluate. They use several, not just SVM or whatever.

Random forests are used a lot in production, surprisingly.

Coursera specialization course by Andrew Ng

Google has a cool demo on an automated phone calling system.

Designing the DNN model is still an engineering exercise at this point.

Turi, Carlos Guestrin's startup, got bought by Apple. Previously he was a machine learning only guy.

Adversarial neural networks, you can come up with the same thing for normal machine learning methods too.

I feel excited. Ready to apply machine learning / DNN to some real problems and see what comes out.

- However, still kinda scared as I am not confident in my ability yet. Kaggle it up! Just like the next abstraction of programming skills.

Deep Learning

Apparently it is blowing every other method out of the water. Unfortunately, we don't understand why it works yet, and so we have to sacrifice the lives of many grad students in order to find local optima.

- With 10 layers, you can do anything?!

- Stochastic Gradient Descent (SGD) is the big win that makes it work

Fizz buzz with a neural network: https://news.ycombinator.com/item?id=11753627

Work

- Maybe work for Trimet and analyze their data?

- CMU Auton Lab did analysis of sensors on navy helicopters to try and get forewarning of rotor aging and repair-needing. Maybe they published some papers too…but probably not for this one.

Do Simple Examples

- Neural networks are more complicated, but they can outperform other methods. quora.

- Apparently neural networks get better with more examples

- Techniques for increasing training examples include all the affine transformations. Stuff that typically shows up with consumer photography

- Can Neural Networks / Deep Learning figure out good features automatically?

- Arterial blood pressure signals often use the slope sum function, but can't a computer figure it out too given lots of examples?

- Alex says he uses SSF and others because it is pretty deterministic and predictable / based on physiology and pretty small/efficient in time and space on a microprocessor. Other, more “learning” style methods might not be that way, especially neural networks.

- Shape Catcher / Detexify does shape recognition on characters in HTML5, pretty cool! (but pretty slow too…)
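The bullet above on increasing training examples with affine transformations can be sketched on a tiny image stored as a list of rows. The helper names are made up for illustration. Real pipelines would also use small rotations, scaling, and shear, which need interpolation and are left out here.

```python
def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def rotate_90(img):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def shift_right(img, fill=0):
    """Translate one pixel to the right, padding the left edge with `fill`."""
    return [[fill] + row[:-1] for row in img]

def augment(img):
    """Return the original plus three transformed copies (same label for all)."""
    return [img, flip_horizontal(img), rotate_90(img), shift_right(img)]

image = [[1, 2],
         [3, 4]]
variants = augment(image)
```

Each variant keeps the original label, so one labelled photo becomes four training examples.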

Numbers

- Generate different classes based on random things. (last digit, prime number, last digit of sum of last digits) and see if the computer can figure out the classification. SVM??
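A minimal sketch of generating such toy datasets follows. It assumes that “last digit of sum of last digits” means the last digit of the digit sum, which is only one reading of the note. The choice of decimal digits as features is also an assumption.

```python
def is_prime(n):
    """Trial division; fine for small toy numbers."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True

def digit_sum(n):
    return sum(int(d) for d in str(n))

# Each rule maps a non-negative integer to a class label.
LABELERS = {
    "last_digit": lambda n: n % 10,
    "is_prime": lambda n: int(is_prime(n)),
    "last_digit_of_digit_sum": lambda n: digit_sum(n) % 10,
}

def make_dataset(rule, numbers):
    """Pair each number's decimal digits (the features) with its class label."""
    label = LABELERS[rule]
    return [([int(d) for d in str(n)], label(n)) for n in numbers]

dataset = make_dataset("last_digit_of_digit_sum", range(95, 100))
```

The rules differ a lot in difficulty: the last digit is a single feature, while primality has no simple pattern in the digits, so a classifier's accuracy on each rule says something about what it can learn.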

RGB Colors

- Classify RGB tuple invariant to lighting

- (maybe make a larger swatch and add noise?)
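One common way to get some lighting invariance is to classify on chromaticity, where each channel is divided by the channel sum. This is offered as a sketch of that idea, not as what these notes settled on. It only removes uniform brightness scaling and does nothing about coloured illuminants.

```python
def chromaticity(rgb):
    """Normalize an (R, G, B) tuple so the three channels sum to 1."""
    r, g, b = rgb
    total = r + g + b
    if total == 0:
        # Black carries no hue; returning neutral gray is a convention chosen here.
        return (1 / 3, 1 / 3, 1 / 3)
    return (r / total, g / total, b / total)

bright = chromaticity((200, 100, 50))
dim = chromaticity((100, 50, 25))  # same surface under half the light
```

Adding noise to a larger swatch, as suggested above, would then test how far the normalized values drift before a classifier confuses neighbouring colours.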

| Experiment | Conditioning | Feature Extraction | Classification |
|---|---|---|---|
| Classifying Number Patterns | | | |
| RGB Color Recognition (invariant to illumination) | | | |

Signal Conditioning

Feature Extraction

Handwriting

- OpenCV 1 and feature detection sequence

- They use a png for digits, but then a pre-computed set of features using a UCI dataset, which has a very fast looking set of features that OpenCV uses for ocr. I wonder how they came up with them…

- MNIST handwriting dataset

Finding Good Features

How does one find features that are robust to Scale/Time/Space/Rotation?

- OpenCV does this with kNN and SVM for handwriting recognition (docs if they help). Somehow it does multi-class SVM???

- Tesseract (2?) is another option, but seems heavier.

- In general for machine learning, one should report the uncertainty of each guess so the user can take it into account.

- This should be very similar to Can Ye's work on arrhythmia detection at CMU.

- Can Neural Networks / Deep Learning figure out good features autonomously?

Classification vs. Regression

Parameter estimation = Regression = Continuous value as output

Classification = “Thresholded” version of Regression (discrete label as output; binary in the simplest case)
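To make the “thresholded regression” view concrete, here is a toy sketch: fit an ordinary least-squares line to 0/1 labels, then cut the continuous output at 0.5. The data and the 0.5 threshold are made up. In practice logistic regression is the more usual choice.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def classify(x, slope, intercept, threshold=0.5):
    """Turn the regression output into a binary label."""
    return int(slope * x + intercept >= threshold)

xs = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
ys = [0, 0, 0, 1, 1, 1]
slope, intercept = fit_line(xs, ys)
predictions = [classify(x, slope, intercept) for x in xs]
```

The regression output before thresholding also gives a rough sense of how far a point is from the decision boundary.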

Estimate Parameters of Signal

The Multiple Signal Classification (MUSIC) algorithm seems really cool. It uses the eigenvectors of the autocorrelation matrix to find the frequencies of k emitters. But what if my emitters aren't sinusoidal?

Amazon Interview

I was quite weak on boosting, clustering (finding n classes among a bunch of fingerprints), and machine learning on decision trees (signal processing and recognition using decision trees?!?! Never heard of it).

The Unreasonable Effectiveness of Data

The Unreasonable Effectiveness of Data, by some Google guys including Peter Norvig. Combining lots of simple classifiers or n-grams into one big system performs better than elaborate models on less data.

“What vegetables prevent osteoporosis” paper looks immediately helpful for reading project.

The same meaning can be expressed in many different ways, and the same expression can express many different meanings.

- Semantic Web helps solve the ambiguity problem, especially if all of the correct forms are filled out.

Questions

SVD/PCA

- What is SVD again? I get really annoyed when people say “we skipped the first two vectors, because they represent background noise (lighting variation usually), and used the later vectors”. Why is that the case? It seems a bad heuristic for using SVD. Maybe clean up your data in other ways first, as you might miss something when you remove the first few vectors!

- Fernando De La Torre had a great set of slides for MLSP. He had a method called Robust PCA that handled the above issue in filtering out the video background and highlighted events in the video.

- A CMU professor came to Edwards and said that they are transforming raw pressure and other biomedical data into the frequency domain (through FFT) and then doing SVD on it! That seems useless, because FFT is just changing the orientation of the data, and SVD is finding the best representation of the data. Maybe there is something that I am missing?
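The FFT-then-SVD question can be checked numerically. The singular values of a data matrix are the square roots of the eigenvalues of its Gram matrix (the inner products between rows), so if a transform leaves the Gram matrix unchanged, the SVD sees the same spectrum. The sketch below uses made-up signals. Whether the professor's pipeline kept complex spectra or only magnitudes is not known from these notes.

```python
import cmath
import math

def unitary_dft(x):
    """DFT scaled by 1/sqrt(N), which makes the transform unitary."""
    n = len(x)
    scale = 1.0 / math.sqrt(n)
    return [scale * sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def gram(rows):
    """Matrix of inner products <a, b> between every pair of rows."""
    return [[sum(p * q.conjugate() for p, q in zip(a, b)) for b in rows] for a in rows]

def max_abs_diff(g1, g2):
    return max(abs(p - q) for r1, r2 in zip(g1, g2) for p, q in zip(r1, r2))

signals = [[1.0, 2.0, 0.0, -1.0], [0.5, -1.0, 3.0, 2.0], [2.0, 2.0, 1.0, 0.0]]
spectra = [unitary_dft(s) for s in signals]
magnitudes = [[abs(c) for c in s] for s in spectra]

diff_complex = max_abs_diff(gram(signals), gram(spectra))       # ~0: pure rotation
diff_magnitude = max_abs_diff(gram(signals), gram(magnitudes))  # clearly nonzero
```

So with complex spectra the intuition above holds and the SVD gains nothing. If only magnitudes are kept, the transform is nonlinear (phase, and therefore time shift, is discarded), which may be the missing piece.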

Tutorials w/ Examples

- SVM Intro very intuitive and graphical

- SVM Examples (Dan Ventura's one is particularly good)

- Andrew Moore (Google) tutorial slides very informative and funny actually too! On lots of stuff, but no examples :( He knows why and why not though

Remote Photoplethysmography (FaceTiming/CardioCam?)

- Maybe use SURF along with LK point tracking for more robustness to hand swipes? YouTube video

IR Details

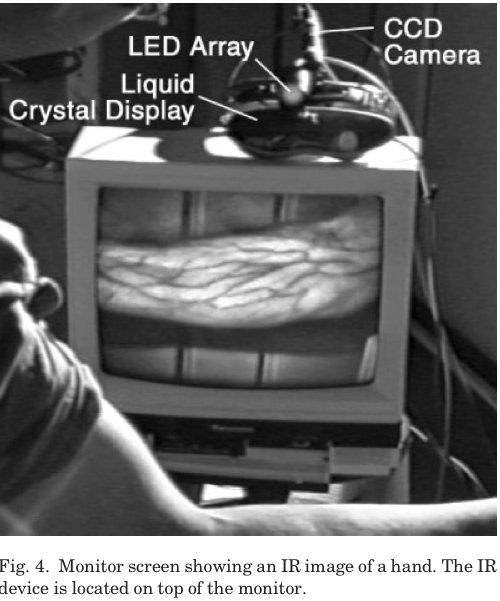

- Great paper on getting good pictures of veins with IR is here: Infrared Imaging of Subcutaneous Veins

- Might need to remove IR filter as veins show up better in IR spectrum?

- Use Macam for external Microsoft webcam on OS X

- (really slow though…)

- Blood Oxygenation level based on “ratio of ratios”, or the “ratio between photoplethysmographic peak-to-peak pulse wave amplitudes acquired at ≥2 different wavelengths”. Green and blue/red?

- HSV Color Space explanation

- Get heart rate monitor from QoLT?

- Utility of photoplethysmogram (talks about correlating with blood pressure, need to read more)

- Based off of CardioCam

- Might need to use FastICA, example use code

    imaqtool; % GUI
    % Mac-specific settings
    vid = videoinput('macvideo', 1, 'YCbCr422_640x480');
    src = getselectedsource(vid);
    vid.FramesPerTrigger = 5;
    preview(vid);

- Overall, much slower than Processing, but much easier to do complex calculations…
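The “ratio of ratios” bullet above can be sketched as follows. Dividing each channel's peak-to-peak (AC) amplitude by its mean (DC) level follows conventional pulse oximetry and is an assumption here, since the quoted definition only mentions amplitudes. Mapping the result to an actual oxygen saturation needs an empirical calibration that is not shown.

```python
def perfusion_ratio(samples):
    """Peak-to-peak (AC) amplitude divided by the mean (DC) level."""
    dc = sum(samples) / len(samples)
    ac = max(samples) - min(samples)
    return ac / dc

def ratio_of_ratios(channel_a, channel_b):
    """Compare pulsatile strength at two wavelengths (or color channels)."""
    return perfusion_ratio(channel_a) / perfusion_ratio(channel_b)

# Made-up traces covering one pulse cycle in two color channels.
channel_a = [100.0, 102.0, 100.0, 98.0]   # AC = 4, DC = 100
channel_b = [200.0, 201.0, 200.0, 199.0]  # AC = 2, DC = 200
ratio = ratio_of_ratios(channel_a, channel_b)
```

With a webcam, the channel traces would be something like the per-frame mean of each color channel over a face region, which is much noisier than these toy values.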

Ideas

- Remote Monitoring of Pulse. CardioCam, their paper, previous paper, Notre Dame summer REU, seems to not have worked?! Where was that flash demo…

- Randy: If you're capturing webcam imagery, might be fun to think about other sorts of things too like how open the eyelids are, how often the eyes move, how fast the head moves etc.

- Analyzing large biomedical data sets quickly with machine learning and pattern recognition. Wired Article. Paper by professor. still need to read it…

- Google Search: Machine Learning Projects

- I kind of like doing genetic algorithms and evaluating them

Features

- Audio: Marsyas, 60-some features for audio and some cool classification demos

Data Acquisition / Processing

- Orange open-source, data mining, cross-platform in Qt

- Google Refine was way cool! Mostly for cleaning up datasets, but really useful.

- Junar, graphical data acquisition, doesn't really work for Craigslist, needs tables? Meh…

Websites

- Onionesque Reality blog by a graduated student. Discusses and has tutorials on eigenfaces and Support Vector Machines

- Andrew Ng homepage Professor at Stanford

Textbooks

- O'Reilly Data Analysis book

- Christopher Bishop's Pattern Recognition and Machine Learning (http://www.amazon.com/Pattern-Recognition-Learning-Information-Statistics/dp/0387310738) (required for Neural Signal Processing)

- Seems to be pretty mathy from the reviews

- An alternate book (more introductory): Machine Learning An Algorithmic Perspective

- Not sure which book we need for Patt Rec, but this one has good reviews.