Table of Contents

Alpha Beta Pruning

- Only useful for minimax game trees. But great intro

Make sure to really test your algorithm

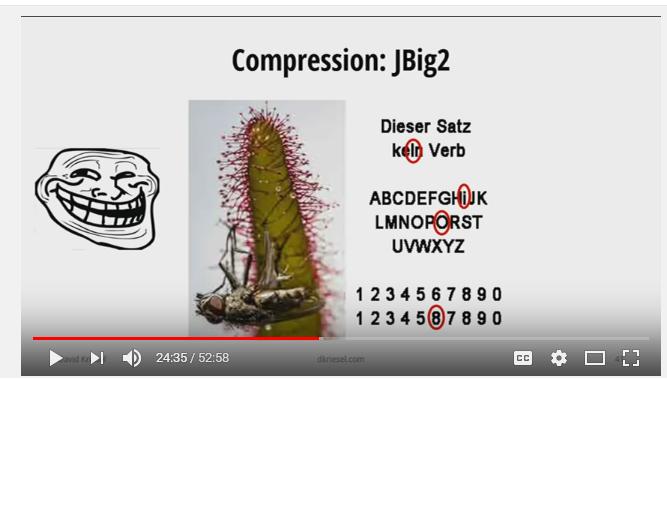

Xerox and JBig2.

On the scale of things too horrible to contemplate, “document-altering scanner” is right up there with “flesh-eating bacteria.”

Visual Algorithm Explanations

http://bost.ocks.org/mike/algorithms/, http://algs4.cs.princeton.edu/home/

- Great site that animates and visualizes various algorithms for better understanding.

Persistent and Distributed Web

Bret Victor has some ideas: http://worrydream.com/TheWebOfAlexandria/2.html. Links to IPFS, and it seems a similar idea is Ethereum.

Lanuage Processing (NLP) Algorithms

Google Auto-correction algorithm

- Used on Google Wave but not Google Docs?

- Is it as simple as what n-gram is more likely? Why is there more to the paper?

Algorithms that DARPA uses to find people on the “dark web”. It's called “memex”. Open Source Software, Forbes article (kinda NSFW)

Nice analysis on word2vec and how it is a more complicated version of matrix factorization problem. here

Inverted-index can use trie or hash lookup. I would think trie would be easier for looking at similar terms (just hash the new term again), but it takes up O(26) for each pointer on each level, so O(26^n)

Model Fitting

LandTrendr

Picking up similarities in forest decay, etc, in the midst of noise. landtrendr.pdf

Walking Animation

Another simplified walking controller , by Adrien Treuille in his PhD. http://grail.cs.washington.edu/projects/graph-optimal-control/

A really cool walking simulator / animation tool that allows you to continuously move between different parameters in motion capture data. What's interesting is how they implement it! (eigenwalkers ![]() ) eigenwalkers.pdftrojechapter07b.pdf and wdp2002_troje.pdf

) eigenwalkers.pdftrojechapter07b.pdf and wdp2002_troje.pdf

Dynamic Programming

Dynamic_programming is very helpful! Basically, DP computes most all of the possibilities and picks the best one?? (very inefficient-sounding) I thought there were overlapping subproblems though…

- Also, Bellman invented it, and there's something about the recursive control-theory-oriented Bellman_Equation that is related. But I can't put my finger on what…

- Continuous Time: Calculus_of_variations

- Discrete Time: Bellman_equation (Bellman papers bellman_calclulusofvariationstodp.pdf and bellman_dp.pdf), with its corollary as the Kalman_filter. It says if you know the formula for the function space, you can compute the optimal control, even in a discrete way.

Use the details of the problem space to your advantage. For example, if you want to get from point A to point B <far away> on a map, you only need to use the interstates for most of it (you don't have to compute for all of the roads in the middle), and getting the average distance of the houses in a city is fine for most of it.

Traffic Lights

Or just don't have them…YouTube Link! https://www.youtube.com/watch?v=cMx_W5sWC78

- The more/most popular simulator for this is SUMO. Has a TCP/IP interface: http://sumo-sim.org/userdoc/Tutorials/TraCI4Traffic_Lights.html

- Another one that seems to be often-updated is MATSim

One company that does it is called Rhythm Engineering, and they implemented a system in Hillsboro OR along Cornell Road (report shows ~20 seconds reduction in travel time along the whole length, done by traffic engineering firm Kittleson and Associates). $48,000 per intersection.

- The lights talk to a central controller.

- Some features include keeping a “green tunnel” to keep the traffic on the main corridor moving smoothly.

Hashing File Names

It only makes sense for 10's of millions of files. still not sure why this limit

Normal behavior is it should be fast.

Analyzing Basketball

TopCoder

Asteroid Detection Marathon Match

- Do the Willamette Programming Competition problems need algorithms experience???

- If so, they should put a book on the website. I (and our teacher) was clueless!

- Try doing simpler versions of the rubik's cube / sudoku problem, think of edge cases and boundary conditions, and understandable, compartmentalized, and documented algorithm (visual???) code.

- Slashdot summary says main algorithm is MOPS and is free but really really slow, but another is http://derastrodynamics.com, which is not free. Interesting back story.

- TopCoder offline download tool: gettc

- Do the problem yourself first as long as you can, then slowly look for hints. Being able to doodle / draw on paper can be really helpful too.

- Tom C.'s tips should go somewhere here. http://www.quora.com/TopCoder/What-are-the-steps-to-be-taken-to-become-a-good-TopCoder/answer/Tom-Conerly

- Another good set of tips: http://www.stefan-pochmann.info/spots/tutorials/

- As well as practicing TopCoder problems (and their people's solutions) and reading their tutorials. But, keep in mind, you weren't trained as a CS major!

Interview Review

Runtimes and space usage of all algorithms and all operations.

Optional

- Fibonacci_Heap (better running time than binomial heap)